This is the Redshift database you want to connect to. You can find its endpoint by selecting your cluster on the Clusters page of the Amazon Redshift console (see where it is located in the screenshot below). IMPORTANT: make sure NOT to include the port at the end of the Redshift endpoint string.
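Because the Host field must not contain the port, it can help to trim the endpoint string before pasting it. The following is a minimal sketch, assuming the console shows the endpoint as `host:port/database` (for example `...redshift.amazonaws.com:5439/dev`). The function name `redshift_host` and the example endpoint are hypothetical placeholders.

```python
def redshift_host(endpoint: str) -> str:
    """Return only the host part of a Redshift endpoint string.

    Assumes the endpoint may end in ':<port>' and/or '/<database>'
    (hypothetical input format copied from the console).
    """
    # Drop surrounding whitespace and any trailing "/database" segment.
    host = endpoint.strip().split("/", 1)[0]
    # Drop a trailing ":port" segment, but only if it is purely numeric.
    name, sep, port = host.rpartition(":")
    if sep and port.isdigit():
        host = name
    return host


if __name__ == "__main__":
    # Hypothetical endpoint, including port and database name.
    print(redshift_host("examplecluster.abc123xyz789.us-west-2.redshift.amazonaws.com:5439/dev"))
```

The port is not lost: in Airflow it goes into the connection's separate Port field instead of the Host field.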

# PART 1 - Airflow installation (automatic)

Navigate to your Desktop in your Terminal. We are going to create a working directory there, but you can create it anywhere else in your file system if you like.

Here, we'll use Airflow's UI to configure your AWS credentials and the connection to Redshift. We run Airflow locally; open the Airflow UI in Google Chrome (other browsers occasionally have issues rendering it).

1. Click on the Admin tab and select Connections.
2. On the create connection page, enter the following values:
   - Login: Enter your Access key ID from the IAM User credentials you downloaded earlier.
   - Password: Enter your Secret access key from the IAM User credentials you downloaded earlier.

   Once you've entered these values, select Save and Add Another.
3. On the next create connection page, enter the following values:
   - Host: Enter the endpoint of your Redshift cluster, excluding the port at the end.
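As an alternative to clicking through the UI, Airflow can also read a connection from an environment variable named `AIRFLOW_CONN_<CONN_ID>` (upper case) whose value is a URI of the form `conn_type://login:password@host:port/schema`. A minimal sketch of building such URIs is shown below. The connection IDs `aws_credentials` and `redshift`, the `postgres` scheme for Redshift, and all credential values are assumptions for illustration only.

```python
from urllib.parse import quote


def airflow_conn_uri(conn_type, login="", password="", host="", port=None, schema=""):
    """Build an Airflow connection URI: conn_type://login:password@host:port/schema."""
    # Percent-encode every reserved character: AWS secret keys often contain "/" and "+".
    userinfo = quote(login, safe="")
    if password:
        userinfo += ":" + quote(password, safe="")
    uri = f"{conn_type}://{userinfo}@{host}"
    if port is not None:
        uri += f":{port}"
    if schema:
        uri += "/" + quote(schema, safe="")
    return uri


if __name__ == "__main__":
    # Hypothetical placeholder credentials; never commit real keys.
    print("AIRFLOW_CONN_AWS_CREDENTIALS=" + airflow_conn_uri("aws", "AKIAEXAMPLE", "secret/with+chars"))
    print("AIRFLOW_CONN_REDSHIFT=" + airflow_conn_uri(
        "postgres", "awsuser", "example-password",
        "examplecluster.abc123xyz789.us-west-2.redshift.amazonaws.com", 5439, "dev"))
```

Note that the host and the port are separate parts of the URI, which mirrors the UI's separate Host and Port fields.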

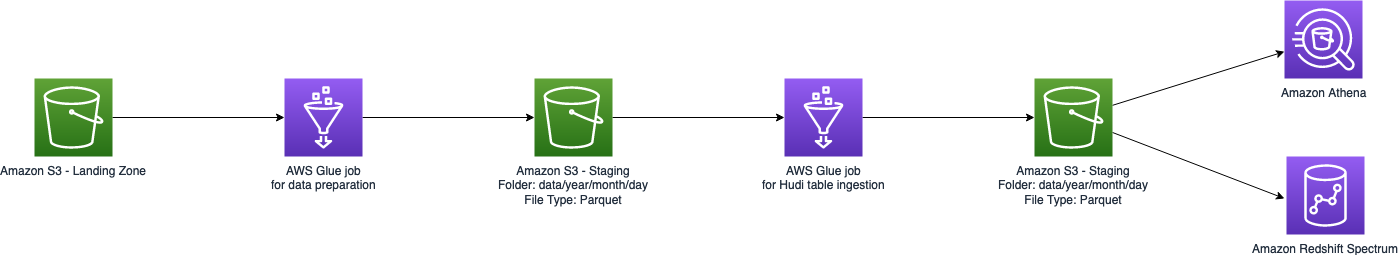

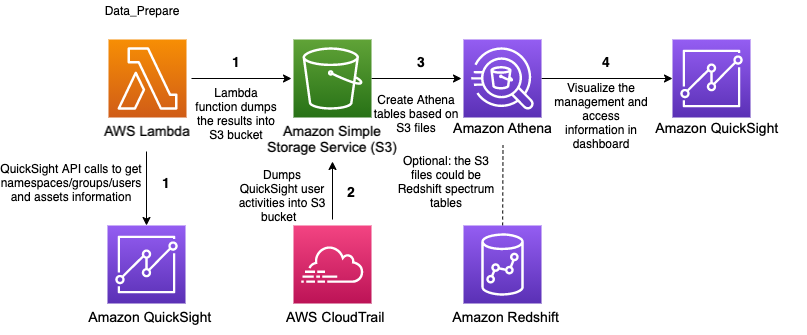

We would like to construct a flow like this.

# Requirements

For this project, you'll be working with two datasets.
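A flow like this is a directed acyclic graph (DAG) of tasks: a task runs only after the tasks it depends on have finished, and independent tasks can run side by side. In a real DAG file the dependencies are declared with Airflow's `>>` operator; the sketch below instead uses only Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to show which tasks could run together at each step. All task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "stage_events": set(),
    "stage_songs": set(),
    "load_songplays": {"stage_events", "stage_songs"},
    "load_dimensions": {"load_songplays"},
    "run_quality_checks": {"load_dimensions"},
}


def execution_batches(deps):
    """Group tasks into batches; tasks in the same batch have no mutual dependencies."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the flow contains a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished so its dependents become ready
    return batches


if __name__ == "__main__":
    for step, batch in enumerate(execution_batches(DEPENDENCIES), start=1):
        print(step, batch)
```

Both staging tasks land in the first batch because neither depends on the other, which is the kind of parallelism a scheduler can exploit.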

# AWS Million Song Dataset Import to Redshift: Installation

There are different ways to install Airflow. I will present two: one uses containers such as Docker, and the other is manual. Since the better choice depends on your purposes, I will show both of them. For the Udacity project it is easier to follow the automatic installation, but if you want to keep more control over the deployment of your work, follow the manual one.

To complete the project, you will need to create your own custom operators to perform tasks such as staging the data, filling the data warehouse, and running checks on the data as the final step. We have provided you with a project template that takes care of all the imports and provides four empty operators that need to be implemented into functional pieces of a data pipeline. The template also contains a set of tasks that need to be linked to achieve a coherent and sensible data flow within the pipeline. You'll be provided with a helpers class that contains all the SQL transformations. Thus, you won't need to write the ETL yourselves, but you'll need to execute it with your custom operators.
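The data-quality step can be reduced to running a list of SQL checks and failing loudly if any result differs from what is expected. The sketch below is not the project template's operator; it is a plain function, assuming each check is a hypothetical `(sql, expected_value)` pair and that the caller passes any DB-API cursor. An in-memory SQLite database stands in for Redshift so the example runs anywhere.

```python
import sqlite3


class DataQualityError(Exception):
    """Raised when one or more data-quality checks fail."""


def run_quality_checks(cursor, checks):
    """Run (sql, expected) pairs; each query must return a single value.

    Returns the number of checks that passed, or raises DataQualityError
    listing every failing check.
    """
    failures = []
    for sql, expected in checks:
        cursor.execute(sql)
        row = cursor.fetchone()
        actual = row[0] if row else None
        if actual != expected:
            failures.append(f"{sql!r}: expected {expected!r}, got {actual!r}")
    if failures:
        raise DataQualityError("; ".join(failures))
    return len(checks)


if __name__ == "__main__":
    # SQLite stand-in with a hypothetical "users" table.
    cur = sqlite3.connect(":memory:").cursor()
    cur.execute("CREATE TABLE users (userid INTEGER, first_name TEXT)")
    cur.executemany("INSERT INTO users VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])
    checks = [("SELECT COUNT(*) FROM users WHERE userid IS NULL", 0)]
    print(run_quality_checks(cur, checks), "check(s) passed")
```

Collecting all failures before raising means a single run reports every discrepancy, rather than stopping at the first one.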

# Data Pipelines with Airflow, Redshift and S3

A music streaming company, Sparkify, has decided that it is time to introduce more automation and monitoring to their data warehouse ETL pipelines and has come to the conclusion that the best tool to achieve this is Apache Airflow. They have decided to bring you into the project and expect you to create high-grade data pipelines that are dynamic and built from reusable tasks, can be monitored, and allow easy backfills. They have also noted that data quality plays a big part when analyses are executed on top of the data warehouse, and they want to run tests against their datasets after the ETL steps have been executed to catch any discrepancies in the datasets.

The source data resides in S3 and needs to be processed in Sparkify's data warehouse in Amazon Redshift. The source datasets consist of JSON logs that describe user activity in the application and JSON metadata about the songs the users listen to. This project will introduce you to the core concepts of Apache Airflow.
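Moving the JSON files from S3 into Redshift is typically done with Redshift's `COPY` command, which a staging step only has to build and execute. The following is a minimal sketch that builds such a statement, assuming key-based credentials (`ACCESS_KEY_ID` / `SECRET_ACCESS_KEY`) and `FORMAT AS JSON 'auto'`, which maps JSON keys to column names; a JSONPaths file given as an `s3://` path is the other option. The table name, bucket path, region and keys are hypothetical, and an IAM role is usually preferable to embedding keys.

```python
import re

# Allow "table" or "schema.table" made of plain identifier characters only.
_IDENT = re.compile(r"[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)?")


def _literal(value: str) -> str:
    """Quote a SQL string literal, doubling any embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_copy_sql(table, s3_path, access_key_id, secret_access_key, region, json_option="auto"):
    """Build a Redshift COPY statement that loads JSON files from S3 into a staging table."""
    if not _IDENT.fullmatch(table):
        raise ValueError(f"unsafe table name: {table!r}")
    if not s3_path.startswith("s3://"):
        raise ValueError(f"expected an s3:// path, got {s3_path!r}")
    return (
        f"COPY {table}\n"
        f"FROM {_literal(s3_path)}\n"
        f"ACCESS_KEY_ID {_literal(access_key_id)}\n"
        f"SECRET_ACCESS_KEY {_literal(secret_access_key)}\n"
        f"FORMAT AS JSON {_literal(json_option)}\n"
        f"REGION {_literal(region)};"
    )


if __name__ == "__main__":
    # Hypothetical placeholders only.
    print(build_copy_sql("staging_events", "s3://example-bucket/log_data",
                         "AKIAEXAMPLE", "example-secret", "us-west-2"))
```

Validating the table name and escaping the literals keeps the generated SQL well-formed even when a secret key contains a quote character.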